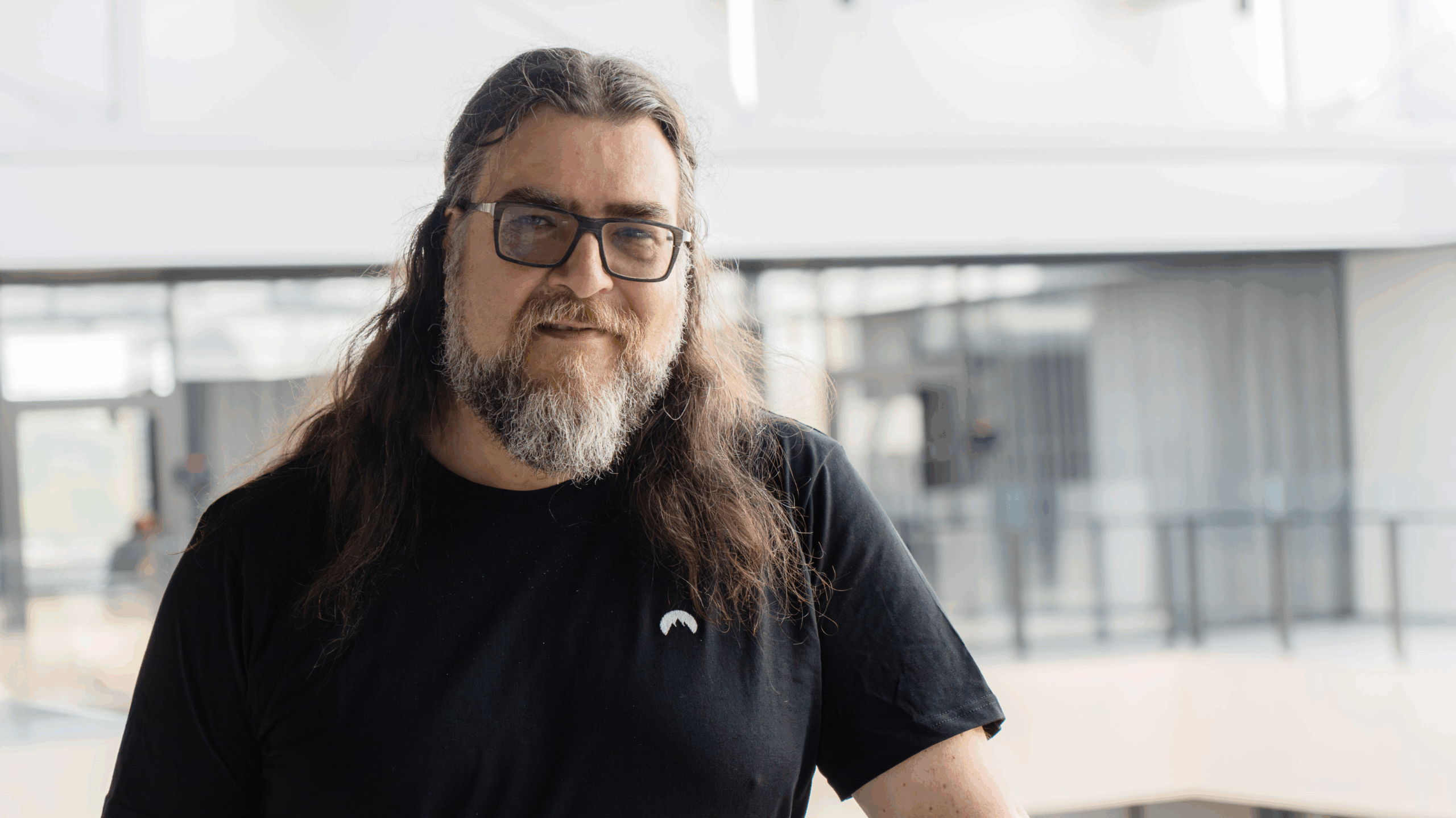

Manuela Maria Veloso, head of J.P. Morgan AI Research and the Herbert A. Simon University Professor in the School of Computer Science at Carnegie Mellon University, speaks to Global Finance about AI development and use.

Global Finance: How do you describe artificial intelligence (AI)?

Manuela Maria Veloso: AI, in some sense, is a young science that started with this tremendously ambitious goal of trying to capture and precisely define all aspects of intelligence and learning so that a machine could then reproduce them. When we started about 50 years ago, we didn’t have the power of GPUs [graphics processing units], the cloud or mobile, and we are still understanding how these computing platforms enable capturing the features of intelligence.

AI is also a field of components: computers need to handle text and language well and have a good understanding of speech. All of that is what we call the perception side or the data side of AI, in which you try to have algorithms feed data into some reasoning platform. Then you have the reasoning part, in which algorithms compute the shortest routes or optimize for matches. You search for actions, based on the input, that achieve specific goals. Finally, the last aspect of intelligence is the actual execution. We think, and we execute. AI is, in fact, the ability to take this input, reason about it and execute.

GF: Are AI systems complete?

Veloso: It’s a mistake to think that AI systems are done. When we make an AI system that classifies applicants into loan/no loan, it uses data with whatever parameters the developers set. Sometimes AI is biased and makes wrong decisions, but not if we use the right data and parameters and if the AI learns from feedback on its decisions. We want AI systems to be able to take in such feedback. When the system continues making wrong decisions, there’s a lack of continuous learning.

GF: Should AI be regulated?

Veloso: I do believe that regulation is needed. We cannot buy many products without someone approving them. We have a society that protects people by having approval processes for products made by scientists and developers. Theoretically, regulations are needed, but the hard part is that AI is available everywhere. Anyone can open a computer, access the cloud, and develop their own algorithms, which makes controlling what can and can’t be done difficult. That’s how people misuse the power of AI.

While we need regulations in one way or another, in practice, regulating these tools is a challenge. For AI, the regulations should be about testing and not necessarily the understanding; the understanding comes as a function of the testing. There isn’t enough dialogue on how we test the rules we develop and what you can and can’t do, though.

GF: How would you describe AI’s presence in corporate finance?

Veloso: There are deployed applications for all sorts of marketing decisions based on features. For credit cards, we get millions of applications a year, and for a long time, computers and algorithms would analyze all the features required to decide. Because of regulations, humans review and verify big decisions, but we use algorithms to detect possible fraud. The finance world has a lot of automation because of the scale and the need to make quick decisions.

We use AI for a variety of purposes, like chatbots and classifying and routing emails to the people who are best able to respond. We use natural language to automatically extract knowledge from public documents. We can process checks automatically and extract the necessary information from images.

GF: How does AI help transform an organization?

Veloso: AI can help you support your decisions by simulating all possible scenarios. AI helps when you want to decide something, like whether and where to open a new branch, for example. With AI, you’re able to simulate different scenarios to determine the ideal location. Ideally, one day, everybody would like an AI assistant that can optimize their problem-solving and simulate scenarios.